What is observability and why does it matter?

ESPHome is an amazing tool, and the devices you build with it are very useful. But how do you notice when they stop working? You would eventually notice if a temperature sensor failed, because your climate control automations would stop behaving as intended. While that's not ideal, it gets worse: what about a sensor that is part of an alarm system, detecting whether a window is open?

Observability is the ability to understand what a system is doing and what state it is in through the data it outputs, mostly metrics and logs. Prometheus, the industry-standard metrics and monitoring tool, collects those metrics and stores them in a time-series database. From there, you can look at the state of your system (in this case, ESPHome devices) over time, present it in a dashboard with Grafana, fire alerts if a metric goes beyond a certain threshold, and so on.

This post assumes that you're using Prometheus, but it isn't the only option. Prometheus metrics use a very simple, standardised text format, and other tools such as VictoriaMetrics can scrape them too. Most of the contents of this post should apply just the same.

Exposing metrics

ESPHome has a prometheus component. Enabling it also requires enabling the web_server component:

web_server:

prometheus:

This will expose all the entities your device has as metrics in the Prometheus text format. For example:

#TYPE esphome_sensor_value gauge
#TYPE esphome_sensor_failed gauge
esphome_sensor_failed{id="onboard_temperature",node="rack-fan",name="Onboard temperature"} 0
esphome_sensor_value{id="onboard_temperature",node="rack-fan",name="Onboard temperature",unit="°C"} 38.87

This is a good start, but we can get more interesting data. ESPHome comes with a variety of diagnostic components. The debug component exposes some basic system information:

debug:

sensor:
  - platform: uptime
    name: "Uptime"
    entity_category: diagnostic
    update_interval: 60s
  - platform: debug
    free:
      name: "Heap free"
      entity_category: diagnostic
    block:
      name: "Heap max block"
      entity_category: diagnostic
    loop_time:
      name: "Loop time"
      entity_category: diagnostic
    cpu_frequency:
      name: "CPU frequency"
      entity_category: diagnostic

text_sensor:
  - platform: debug
    device:
      name: "Device info"
    reset_reason:
      name: "Reset reason"

We can also get data about connectivity. For WiFi devices, we have the wifi_info and wifi_signal components:

sensor:
  - platform: wifi_signal
    name: "WiFi signal"
    entity_category: diagnostic

text_sensor:
  - platform: wifi_info
    ip_address:
      name: "IP address"
      entity_category: diagnostic
    mac_address:
      name: "MAC WiFi address"
      entity_category: diagnostic

And similarly for ethernet, there's ethernet_info:

text_sensor:
  - platform: ethernet_info
    ip_address:
      name: ESP IP Address
      address_0:
        name: ESP IP Address 0
      address_1:
        name: ESP IP Address 1
    dns_address:
      name: ESP DNS Address

This gives us quite a bit of data about what's going on with those devices. If you have multiple device configurations in the same repository, a convenient way to handle this is to save these snippets in shared files, e.g. common/connectivity/ethernet.yaml or common/observability.yaml, and in each individual device configuration, pick and mix what that device needs:

packages:
  base: !include
    file: common/observability.yaml
  ethernet: !include
    file: common/connectivity/ethernet.yaml

Reading the output

To validate these changes, you can open http://yourdevice.yourdomain.com/metrics. You should be greeted by a nice wall of text. Have a look around, it's not too complex - each line starting with # is a comment (the default comments specify the type of each metric), and other lines are the actual metrics. To re-use the example from above:

#TYPE esphome_sensor_value gauge
#TYPE esphome_sensor_failed gauge
esphome_sensor_failed{id="onboard_temperature",node="rack-fan",name="Onboard temperature"} 0
esphome_sensor_value{id="onboard_temperature",node="rack-fan",name="Onboard temperature",unit="°C"} 38.87

We have two metrics here, esphome_sensor_value and esphome_sensor_failed. They're both of type gauge. Both describe a sensor with the id onboard_temperature on the node rack-fan, with the friendly name Onboard temperature. The failed metric has a value of 0 (which means it isn't failing), and the value metric tells us the unit is °C and the value is 38.87. Nice and toasty.

Multiple metrics can (and likely will) have the same name, e.g. you'll likely have multiple instances of esphome_sensor_value. However, it's guaranteed that the labels (id, node...) are a unique combination for each instance of that metric.

Scraping the metrics

Now this is all well and good, we have devices exposing metrics. But how do we read them? We need to tell Prometheus where they are. There are multiple approaches there, from simplest to most automated.

Listing all ESPHome devices in Prometheus' configuration

This is the most obvious solution, but it requires changing and reloading Prometheus' configuration every single time you add, remove or rename an ESPHome device. Here's what it could look like. In the Prometheus configuration:

scrape_configs:
  - job_name: esphome
    metrics_path: /metrics
    static_configs:
      - targets:
          - bedroom-light.example.com:80
          - living-room-sensor.example.com:80
          - rack-fan.example.com:80

It's as simple as it gets: if the list doesn't change too often, it's an easy option. Prometheus scrapes each of these targets once a minute by default (the global scrape_interval defaults to 1m).
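The scrape interval can also be set explicitly per job rather than relying on the global default. A minimal sketch, assuming the same job as above; the 30s and 10s values are illustrative rather than recommendations, and only one target is listed for brevity:

```yaml
scrape_configs:
  - job_name: esphome
    metrics_path: /metrics
    # Override the global scrape_interval for this job only
    scrape_interval: 30s
    # Give up on a slow device well before the next scrape is due
    # (must not exceed scrape_interval)
    scrape_timeout: 10s
    static_configs:
      - targets:
          - rack-fan.example.com:80
```

Keep in mind that every scrape is an HTTP request the microcontroller has to serve, so a very short interval is probably not worth it for slow-moving values like temperature.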

Using file_sd_config

While fundamentally similar, this is a slight improvement over the previous solution. We still have a hard-coded list of devices, but it now lives in its own file (which we could for example generate from a CI pipeline), and Prometheus would pick up any changes without having to reload it. This is what the Prometheus configuration looks like:

scrape_configs:
  - job_name: esphome
    metrics_path: /metrics
    file_sd_configs:
      - files:
          - /etc/prometheus/esphome_targets.json

And the esphome_targets.json:

[
  {
    "targets": [
      "bedroom-light.example.com:80",
      "living-room-sensor.example.com:80",
      "rack-fan.example.com:80"
    ]
  }
]

Leveraging mDNS

This approach shifts the discovery process entirely. Instead of providing Prometheus with a list of targets to scrape, the targets advertise themselves, using mDNS. The viability of this option is fairly dependent on your network infrastructure — some more complex networks might have difficulties with e.g. forwarding advertisements over different VLANs. As a rule of thumb, if Home Assistant is able to discover your ESPHome devices automatically, this option would work well for you, as mDNS is what allows Home Assistant to do this discovery as well. A quick sanity check, if you're on Linux/macOS and have avahi-browse available:

avahi-browse -r _esphomelib._tcp

This should list the different ESPHome devices on your local network.

To make this work, we need two things:

- Have the devices advertise their metrics service (the _esphomelib._tcp advertisements mentioned above are for the core ESPHome API, on a different port).
- Tell Prometheus which mDNS service to listen for.

The first one is trivial, as we can modify the behaviour of the mdns ESPHome component:

mdns:
  services:
    - service: "_prometheus-http"
      protocol: "_tcp"
      port: 80

While this is enough, we can leverage TXT records to pass more metadata:

mdns:
  services:
    - service: "_prometheus-http"
      protocol: "_tcp"
      port: 80
      txt:
        path: /metrics
        version: !lambda 'return ESPHOME_VERSION;'
        mac: !lambda 'return get_mac_address();'
        platform: !lambda 'return ESPHOME_VARIANT;'
        board: !lambda 'return ESPHOME_BOARD;'
        network: !lambda |-
          #ifdef USE_WIFI
          return std::string("wifi");
          #endif
          #ifdef USE_ETHERNET
          return std::string("ethernet");
          #endif
          return std::string("unknown");
        project_name: !lambda 'return ESPHOME_PROJECT_NAME;'
        project_version: !lambda 'return ESPHOME_PROJECT_VERSION;'

These additional fields aren't strictly needed, but they add some more information to help diagnose issues if something goes wrong.

Next, the Prometheus configuration. Unfortunately, Prometheus doesn't support mDNS directly. Multiple tools exist to bridge that gap, e.g. 1 or 2. My preference is my own prometheus-mdns-sd: it listens to the mDNS advertisements and exposes a /targets endpoint listing all the discovered devices, which Prometheus can read directly. The Prometheus configuration is pretty short and never needs to change:

scrape_configs:
  - job_name: esphome
    metrics_path: /metrics
    http_sd_configs:
      - url: http://prometheus-mdns-sd:8080/targets

Validating the scraping

Whichever option you went with, you should now have some metrics scraped by Prometheus. To query them, open the Prometheus web UI. You can query data across your devices, e.g.:

esphome_sensor_value{id="wifi_signal"}

| Metric | Value |
|---|---|
| esphome_sensor_value{id="wifi_signal", instance="desk", job="esphome", name="WiFi signal", node="desk", unit="dBm"} | -48 |
| esphome_sensor_value{id="wifi_signal", instance="rack-fan", job="esphome", name="WiFi signal", node="rack-fan", unit="dBm"} | -36 |
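How the instance label ends up as a short name like desk depends on your discovery mechanism. With a plain list of targets, Prometheus sets instance to the full target address (e.g. rack-fan.example.com:80). If you prefer short names, a relabelling rule along these lines could do it. This is a sketch under the assumption that the first DNS label of the target is the name you want; it is not necessarily how the output above was produced:

```yaml
scrape_configs:
  - job_name: esphome
    metrics_path: /metrics
    static_configs:
      - targets:
          - rack-fan.example.com:80
    relabel_configs:
      # Copy everything before the first "." or ":" of the target
      # address into the instance label (rack-fan.example.com:80 -> rack-fan)
      - source_labels: [__address__]
        regex: '([^.:]+).*'
        target_label: instance
        replacement: '$1'
```

The scrape itself still uses the full address; only the label attached to the stored metrics changes.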

Alerting

Once we're collecting metrics, we can leverage them to trigger alerts. Prometheus' alerting rules let you define conditions that, when met, fire alerts to Alertmanager. Let's go through a few useful rules.
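The rules in the following sections are list entries: they belong under a rule group in a rules file, and Prometheus needs to be told where that file and Alertmanager are. A minimal sketch of the surrounding configuration, assuming a rules file at /etc/prometheus/rules/esphome.yaml and an Alertmanager reachable at alertmanager:9093 (both hypothetical, adjust to your setup). First, the relevant excerpt of the Prometheus configuration:

```yaml
# Where to load alerting rules from (hypothetical path)
rule_files:
  - /etc/prometheus/rules/esphome.yaml

# Where to send firing alerts (hypothetical address)
alerting:
  alertmanagers:
    - static_configs:
        - targets:
            - alertmanager:9093
```

And the skeleton of the rules file itself:

```yaml
groups:
  - name: esphome
    rules:
      # Each "- alert: ..." entry from the sections below goes here
      - alert: ESPHomeDeviceDown
        expr: up{job="esphome"} == 0
        for: 5m
        labels:
          severity: critical
```

Running promtool check rules against the rules file before reloading Prometheus is a cheap way to catch indentation or expression mistakes.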

Device down

The most fundamental alert. Prometheus automatically tracks whether a scrape target is reachable via the up metric. If a device stops responding for 5 minutes, something is likely wrong:

- alert: ESPHomeDeviceDown
  expr: |
    up{job="esphome"} == 0
  for: 5m
  labels:
    severity: critical
  annotations:
    summary: "ESPHome device {{ $labels.instance }} is down"
    description: "ESPHome device {{ $labels.instance }} has been unreachable for more than 5 minutes."

Unexpected reboots

A device that just rebooted isn't necessarily broken, but you probably want to know about it — especially if it keeps happening. This rule catches any device with an uptime under 5 minutes:

- alert: ESPHomeDeviceReboot
  expr: |
    esphome_sensor_value{id="uptime",job="esphome"} > 0
    and
    esphome_sensor_value{id="uptime",job="esphome"} < 300
  labels:
    severity: info
  annotations:
    summary: "ESPHome device {{ $labels.node }} rebooted"
    description: "ESPHome device {{ $labels.node }} recently rebooted (uptime {{ $value }}s)."

Entity failures

ESPHome exposes a _failed metric for each entity. This catches any entity that has been in a failed state for more than 5 minutes — a sensor that can't be read, an I2C device that isn't responding, etc.:

- alert: ESPHomeEntityFailed
  expr: |
    max by (node, id, name) ({__name__=~"esphome_.*_failed",job="esphome"}) == 1
  for: 5m
  labels:
    severity: warning
  annotations:
    summary: "ESPHome entity {{ $labels.name }} failed on {{ $labels.node }}"
    description: "ESPHome entity {{ $labels.name }} ({{ $labels.id }}) on {{ $labels.node }} has been failing for more than 5 minutes."

Firmware updates available

This one depends on whether you have a mechanism for updates, and whether you apply them automatically or not. If updates are supposed to be applied hourly and haven't been applied for over an hour, it might point to an issue somewhere. We'll set up exactly this kind of automated update mechanism in a later post in this series.

- alert: ESPHomeFirmwareUpdate
  expr: |
    esphome_update_entity_state{job="esphome",value!="none"} == 1
  for: 1h
  labels:
    severity: info
  annotations:
    summary: "ESPHome device {{ $labels.node }} has a firmware update"
    description: "ESPHome device {{ $labels.node }} has a firmware update available."

Low heap memory

ESP32s and ESP8266s don't have much memory to spare. If the free heap memory gets too low, it might be a sign to look into the firmware configuration.

- alert: ESPHomeLowHeapMemory
  expr: |
    esphome_sensor_value{id="heap_free",job="esphome"} < 10000
  for: 10m
  labels:
    severity: warning
  annotations:
    summary: "ESPHome device {{ $labels.node }} heap memory low"
    description: "ESPHome device {{ $labels.node }} has only {{ $value }} bytes of free heap memory."

High loop time

ESPHome's main loop should run fast. If it consistently takes more than 100ms, something is blocking — possibly a misbehaving component or too many entities:

- alert: ESPHomeHighLoopTime
  expr: |
    esphome_sensor_value{id="loop_time",job="esphome"} > 100
  for: 10m
  labels:
    severity: warning
  annotations:
    summary: "ESPHome device {{ $labels.node }} loop time high"
    description: "ESPHome device {{ $labels.node }} main loop is taking {{ $value }}ms."

Weak WiFi signal

A signal below -80 dBm is unreliable. This often manifests as intermittent failures rather than a clean disconnection, making it harder to diagnose:

- alert: ESPHomeWeakWiFiSignal
  expr: |
    esphome_sensor_value{id="wifi_signal",job="esphome"} < -80
  for: 10m
  labels:
    severity: warning
  annotations:
    summary: "ESPHome device {{ $labels.node }} WiFi signal weak"
    description: "ESPHome device {{ $labels.node }} WiFi signal is {{ $value }} dBm."

Dashboard

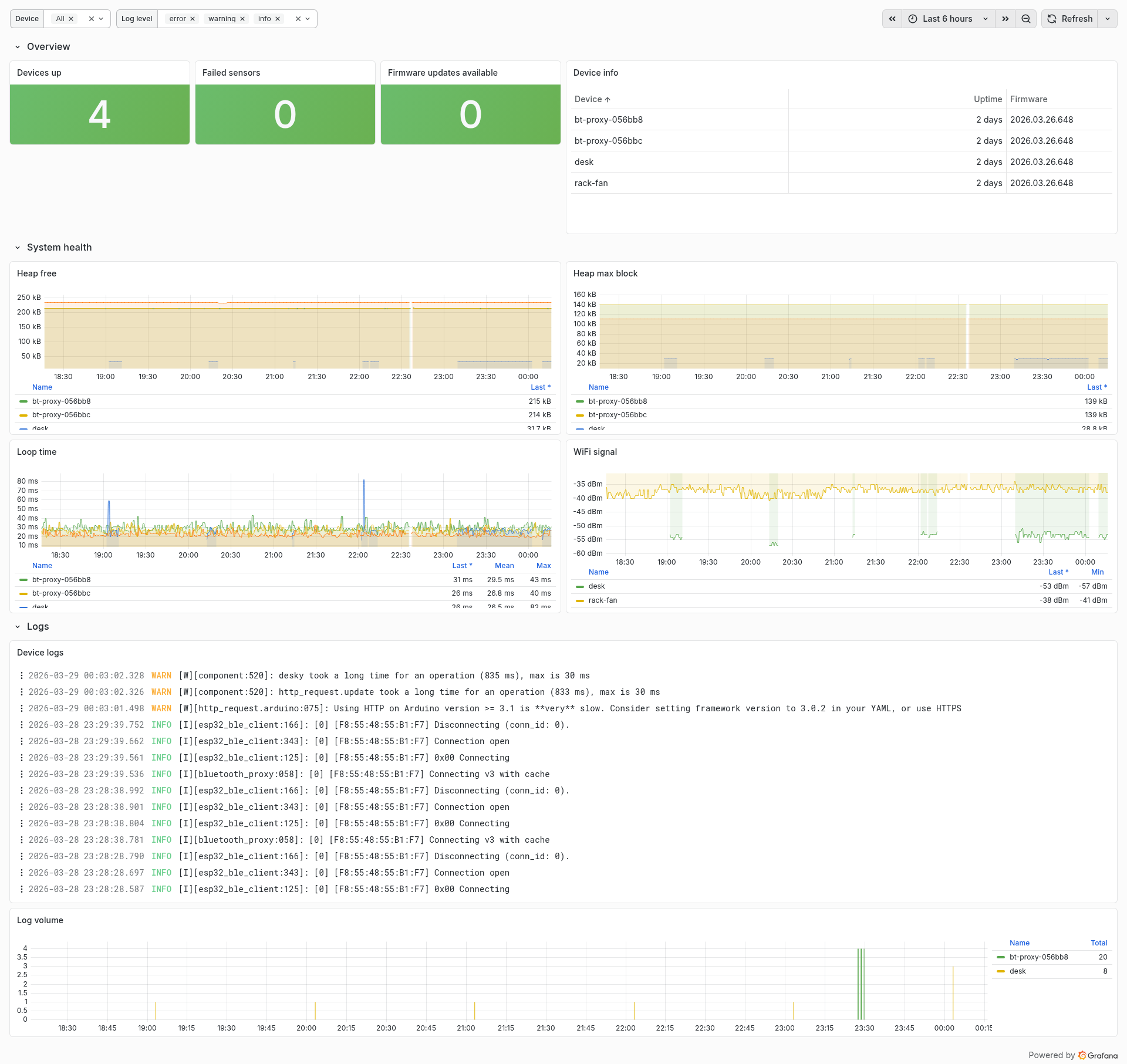

Now that we're collecting metrics, we can also visualise them in a dashboard. While alerts are typically a better way of detecting issues, having a dashboard ready to diagnose why something went wrong, or identifying patterns, can come in handy. With the metrics we're now collecting, this is the type of dashboard we can create:

We now have a full metrics pipeline: from ESPHome devices exposing data, through Prometheus scraping and storing it, to alerts catching common failure modes. The monitoring we have set up so far doesn't cover the logs displayed in this dashboard; that will be the subject of the next article in the series!
